Musing 3: Blockchain, LLMs and Autonomous Agents

Interesting ideas out of the Robotics Group at the University of León, Spain

Robotics has always been an interesting, challenging and fun pursuit in AI. Once ChatGPT emerged, it was only a matter of time before we would start seeing its applications in robotics. This arXiv preprint out of Spain is not the first paper to do so, nor, I would argue, is it the most interesting or groundbreaking, but I picked it for today’s musing because it uses a set of hot ideas (see the title) and shows how they could be used to address an important problem: improving the trustworthiness and safety of autonomous agents in environments involving human interactions.

The use of autonomous agents (think “mobile robots”) is only going to become more commonplace as the technology becomes cheaper and better. As a result, it becomes crucial to explain the reasons behind such agents’ actions, especially to those who are not experts, to build trust and safety and prevent mistakes or misunderstandings. These explanations also help to improve communication and make interactions more effective.

This paper introduces a system designed for ROS-based mobile robots that focuses on accountability and explainability. ROS stands for Robot Operating System and is an open-source framework for robot software development, providing a collection of tools, libraries, and conventions that aim to simplify the complex process of creating robust and versatile robot behavior across a wide variety of robotic platforms.

The system is made up of two key parts: one is a secure, unalterable record-keeping component that uses blockchain technology, and the other is responsible for creating explanations in everyday language, using Large Language Models (LLMs) to analyze the data recorded.
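To make the "unalterable record-keeping" idea concrete, here is a minimal sketch of a hash-chained log in Python. It is not the paper's implementation: a real blockchain adds distribution and consensus on top, and every name here (`HashChainLog`, `Block`, the event fields) is a hypothetical of mine. It only illustrates the core property that changing any past record breaks every later hash.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """One log entry plus the hash linking it to its predecessor."""
    index: int
    prev_hash: str
    payload: dict
    hash: str


def _digest(index: int, prev_hash: str, payload: dict) -> str:
    # Serialize deterministically so the same content always hashes the same.
    body = json.dumps(
        {"index": index, "prev_hash": prev_hash, "payload": payload},
        sort_keys=True,
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class HashChainLog:
    """Single-process, append-only log (an illustrative stand-in for a blockchain)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first block

    def __init__(self) -> None:
        self.blocks: list[Block] = []

    def append(self, payload: dict) -> Block:
        prev_hash = self.blocks[-1].hash if self.blocks else self.GENESIS
        index = len(self.blocks)
        block = Block(index, prev_hash, payload, _digest(index, prev_hash, payload))
        self.blocks.append(block)
        return block

    def verify(self) -> bool:
        """Recompute every hash; return False if any record or link was altered."""
        prev_hash = self.GENESIS
        for i, block in enumerate(self.blocks):
            if block.index != i or block.prev_hash != prev_hash:
                return False
            if block.hash != _digest(block.index, block.prev_hash, block.payload):
                return False
            prev_hash = block.hash
        return True


if __name__ == "__main__":
    log = HashChainLog()
    # Hypothetical robot events, made up for this example.
    log.append({"event": "goal_received", "goal": "kitchen"})
    log.append({"event": "goal_aborted", "reason": "obstacle"})
    print("intact:", log.verify())
    log.blocks[0].payload["goal"] = "lab"  # simulate tampering with an old record
    print("after tampering:", log.verify())
```

A local chain like this only makes tampering detectable. Someone with full access could still rewrite the whole chain, which is the gap that replicating the ledger across several parties is meant to close.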

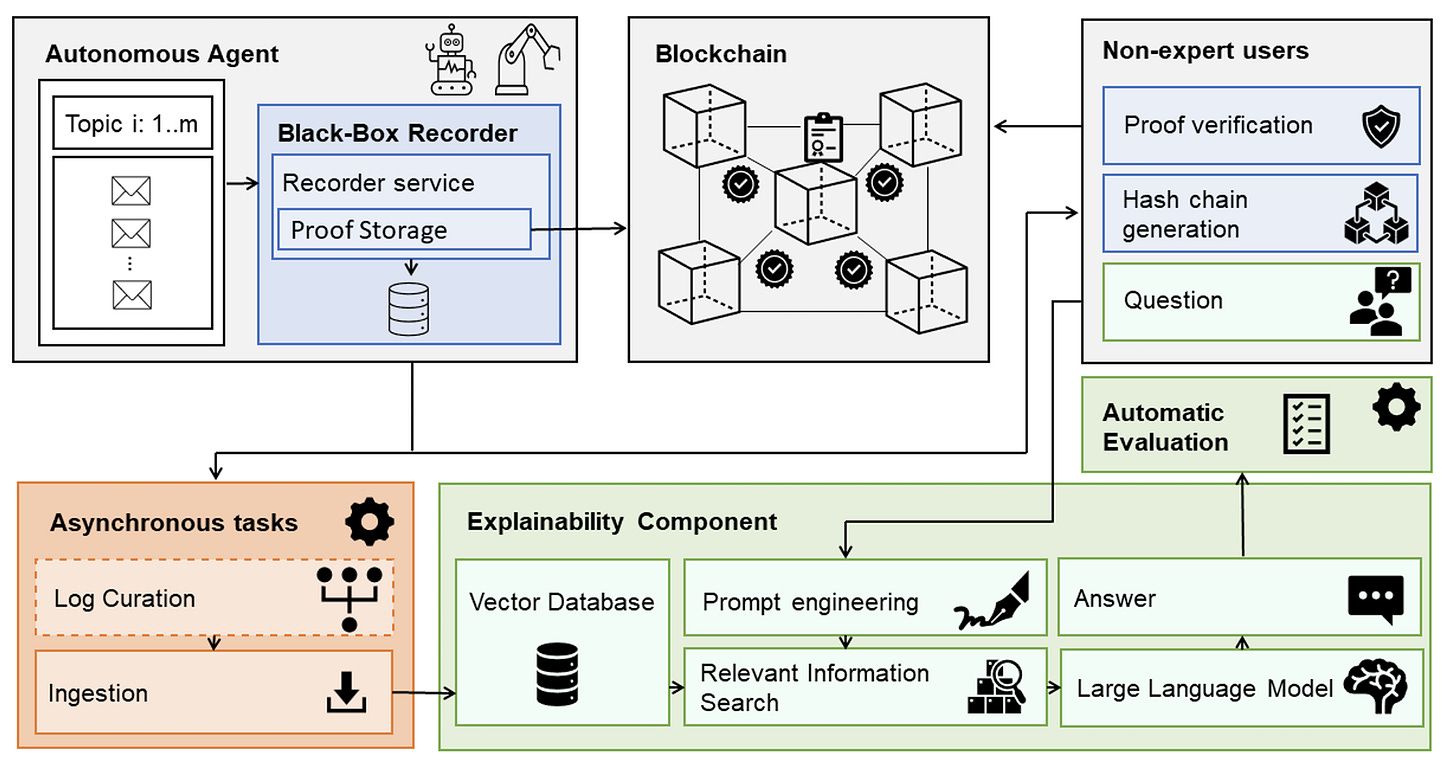

Some specific findings and contributions from the paper (the figure below, which is taken from Fig. 1 of the paper, is also a handy reference for seeing how all of these come together):

Security Enhancements for Autonomous Agents: The study emphasizes the importance of security in the deployment of autonomous agents, particularly in public spaces where interactions with humans are frequent.

Architecture for Accountability and Explainability: A novel architecture is proposed, consisting of two main components:

A "black box" element that ensures accountability through anti-tampering features enabled by blockchain technology. This component serves as a secure log system, preserving the integrity, confidentiality, and availability of the agent's data and actions.

A component that generates natural language explanations using Large Language Models (LLMs) based on the data recorded in the black box. This approach aims to make the autonomous agents' decisions and actions understandable to non-expert users, thereby enhancing trust and communication between the agent and the user.

Use of Blockchain for Immutable Logs: The authors discuss the use of blockchain technology as a means to ensure the immutability of logs and protect against unauthorized modifications. This approach enhances the trustworthiness of the logging system by enabling the detection and isolation of faulty behaviors and their origins.

Large Language Models for Natural Language Explanations: They use LLMs to generate comprehensible natural language explanations from the recorded data. This technique is crucial for diagnosing failures in goal achievement and making the explanations accessible to humans, thereby contributing to the development of “Explainable Autonomous Robots” or XAR.
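As a rough illustration of the explanation component, the sketch below turns recorded log entries into a prompt that could be handed to an LLM. It is an assumption-laden toy rather than the authors' pipeline: the record fields (`stamp`, `node`, `message`), the function names, and the prompt wording are all hypothetical, the "retrieval" is just "take the most recent entries", and no model is actually called.

```python
from typing import Iterable, Mapping


def format_record(record: Mapping[str, object]) -> str:
    """Render one log record as a single readable line.

    The field names are hypothetical; real ROS logs would need their own mapping.
    """
    stamp = record.get("stamp", "?")
    node = record.get("node", "unknown")
    message = record.get("message", "")
    return f"[{stamp}] {node}: {message}"


def build_explanation_prompt(
    question: str,
    records: Iterable[Mapping[str, object]],
    max_records: int = 20,
) -> str:
    """Assemble a plain-text prompt from the most recent log records."""
    if max_records <= 0:
        raise ValueError("max_records must be positive")
    # Naive stand-in for retrieval: keep only the latest entries.
    recent = list(records)[-max_records:]
    log_text = "\n".join(format_record(r) for r in recent)
    return (
        "You are explaining a mobile robot's behavior to a non-expert.\n"
        "Use only the log entries below; say so if they are insufficient.\n\n"
        f"Log entries:\n{log_text}\n\n"
        f"Question: {question}\n"
        "Answer in plain language:"
    )


if __name__ == "__main__":
    # Made-up records for illustration only.
    example_records = [
        {"stamp": 12.0, "node": "planner", "message": "goal received: kitchen"},
        {"stamp": 15.4, "node": "controller", "message": "obstacle detected, replanning"},
        {"stamp": 21.9, "node": "planner", "message": "no valid path, goal aborted"},
    ]
    print(build_explanation_prompt("Why did the robot stop?", example_records))
```

The resulting string would then go to whichever LLM is in use. Telling the model to rely only on the supplied entries is one simple way to keep explanations tied to what was actually recorded.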

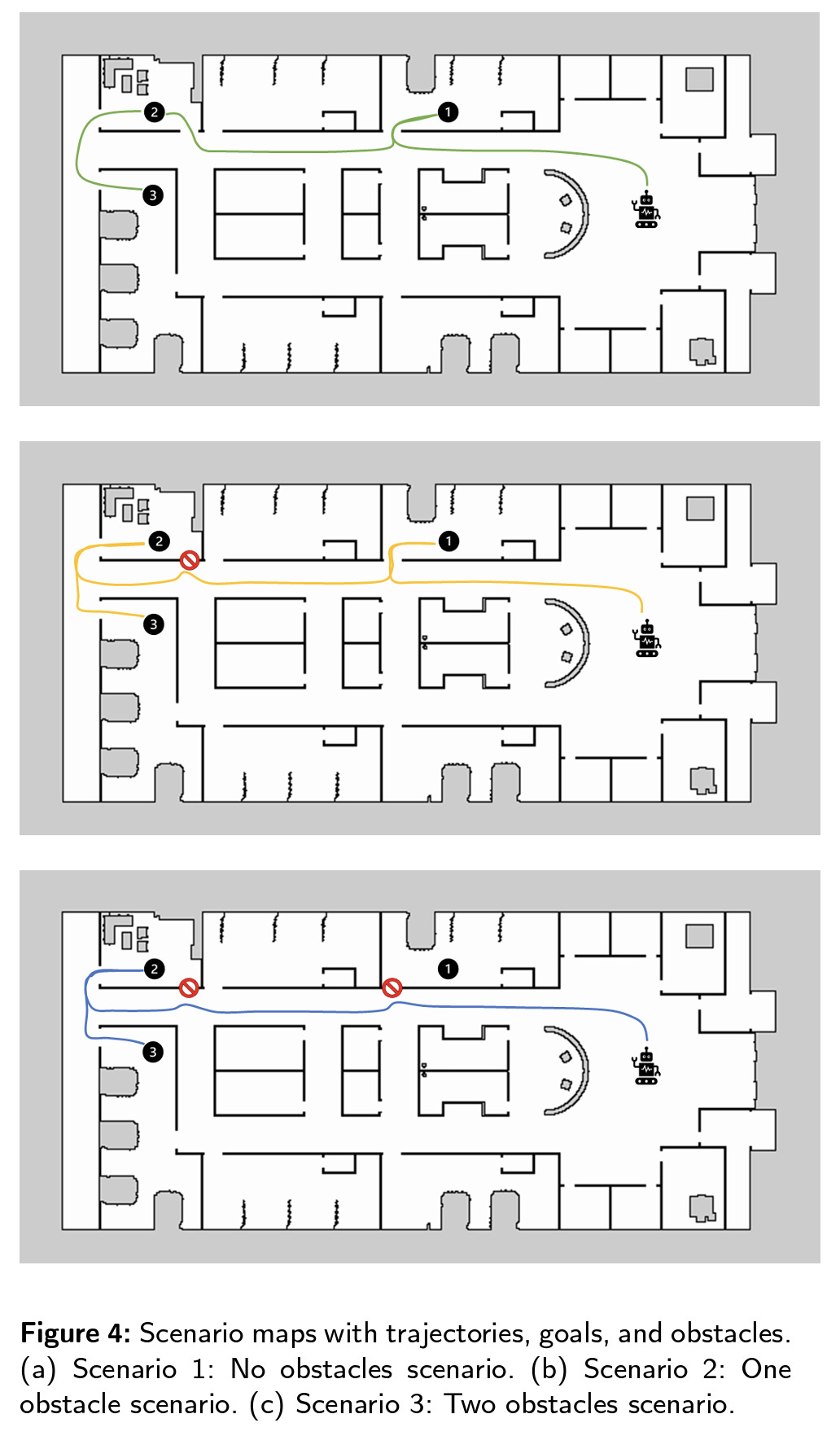

Experimentally, the authors test the performance of this system in three scenarios involving the navigation of autonomous agents, assessing its ability to provide responsible and clear explanations based on the robot's actions. One of the things I enjoyed in the paper is that the authors are not just being abstract: they do the hard work of implementing the architecture in ROS-based mobile robots and then evaluating it. The map below explains these scenarios nicely:

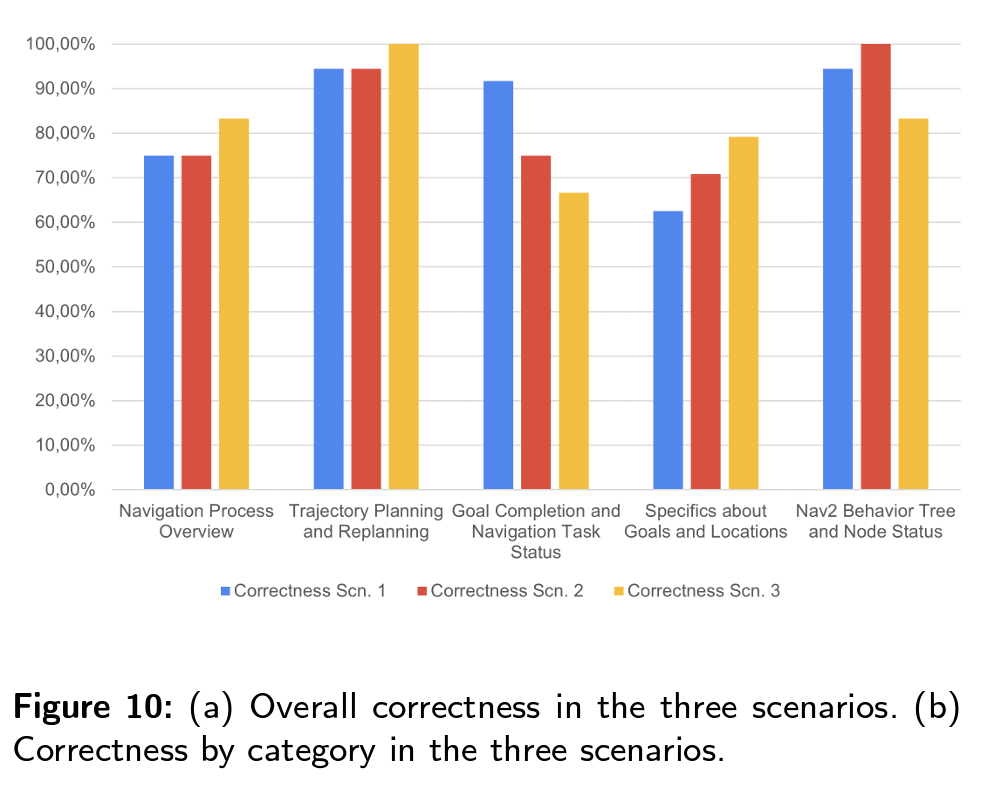

The results were promising. Obviously, Scenario 3 is the hardest scenario, but even there, the robots were not completely failing. Hence, the results offer some evidence of effectiveness even in challenging real-world situations. However, like any research paper, this one also shows how much further we still need to go and that this is, by no means, a solved or settled problem. Most of the results are presented as graphs, so it’s hard to glean things like statistical significance and robustness, which unfortunately seem not to have been given as much thought in this study.

Here are some of my own thoughts about this paper:

It is a little hard to read, even for me, but that is probably because I am more of a knowledge graphs person than one with any experience in robotics. I thought the figures were high quality, but I also sometimes felt that too many things were going on at the same time (although I didn’t cover it, they also threw in Retrieval Augmented Generation or RAG in their approach). I wonder, for instance, whether blockchain is necessary to this paper. There are definitely some merits: the “black box” component, enhanced by blockchain technology, demonstrated strong anti-tampering properties. This ensured the integrity and reliability of the logged data regarding the robot's actions, enabling accurate post-event analysis and fostering trust. However, for most ordinary robots deployed in settings like the maze-navigation examples they used, I’m not sure tampering with event or log data is such a serious problem.

The experiments revealed challenges inherent to autonomous agents in real-world scenarios, such as dealing with noisy and semi-structured log data. Despite these challenges, the architecture provided a solid foundation for enhancing the trustworthiness of autonomous agents through accountable and explainable functionalities.

The component that leverages LLMs successfully generated coherent, accurate, and understandable natural language explanations based on the data recorded in the black box. These explanations were accessible to non-expert users, effectively bridging the communication gap between the autonomous agents and users. Personally, I found this to be the most exciting finding (but I’m biased) as it suggests that research in artificial embodied cognition is on the cusp of taking off. Maybe we should all start playing with some robots and learning the innards of ROS.